Here you'll find common questions regarding WyvernChat, chatting, LLMs, and anything else in the world of AI roleplay.

¶ General WyvernChat

¶ ❓I set my new character to public, but I don't see it on Explore!

If your character wasn't approved by our automod, it will be reviewed by our volunteer human moderation team for any rule-violating content. Reviews typically take no more than 12 hours, but can take longer if we're under heavy load.

¶ ❓I had WyvernChat open in multiple tabs, and it asked me to log in again. What happened?

For security reasons, we only allow WyvernChat to be open in one tab at a time. Please close the extra tabs and the website should function as normal.

¶ LLM Troubleshooting

¶ ❓I see this error: "This model is having trouble responding, please try a different model or wait for this one to become operational."

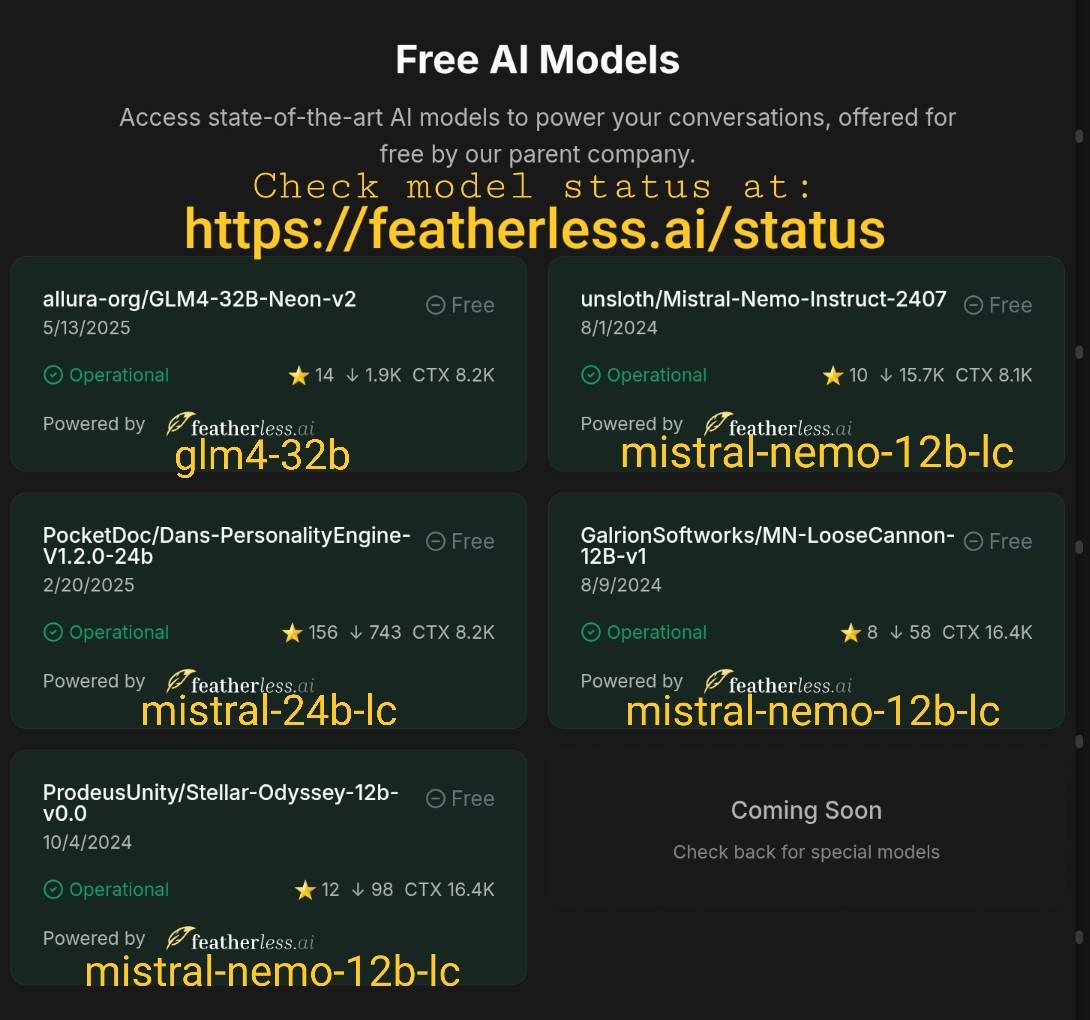

This means there's an issue with the model's connection. Check the model status page from Featherless for the latest updates on WyvernChat's models.

Each Free queue option corresponds to the following model:

- Neon-v2: glm4-32b

- Mistral Nemo Instruct: mistral-nemo-12b-lc

- Dan's Personality Engine: mistral-24b-lc

- LooseCannon: mistral-nemo-12b-lc

- Stellar Odyssey: mistral-nemo-12b-lc

¶ ❓How do I stop a bot from repeating messages?

This issue happens frequently at the beginning of chats. Your character may be doing any of these:

- Repeating example messages provided by the creator.

- "Spitting out" instructions: the system prompt, descriptions of the bot itself, the user's persona, or any other field.

- Looping and rewriting its greeting or any later messages.

¶ Solution 1 (easy & quick): use Toadplant's sampler preset.

- Follow this link to Toadplant's De-Repeater. Then, hit the Bookmark icon. The sampler preset will be added to your bookmarks.

- Navigate to the Chat page, then select the gear icon at the top right to open chat settings.

- Select the slider icon (second tab from the left) to open the Overrides tab.

- Scroll down to Parameter Preset Override. Select Bookmarks from the dropdown. Then, select Toadplant's De-Repeater.

- Select Save. Once you see a success notification, the preset will be applied to your chat.

¶ Solution 2 (a bit harder): change your LLM settings

- On the Chat page, select the gear icon to open the right sidebar. Find the section Advanced AI settings, and open your samplers.

- Increase Rep Pen Range (Repetition Range) to a value in the range 512-1500

- Increase Repetition Penalty to a value in the range 1-1.15

- Increase Repetition Pen Slope to a value in the range 0.3-0.8

These solutions have been tested with LLaMA-3.1 finetunes, Magnum finetunes, and Mistral finetunes.
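
To get a feel for what these samplers do, here is a minimal Python sketch of a repetition penalty limited to a recent window of tokens. It is a simplified illustration, not WyvernChat's or any backend's actual implementation: the function name `apply_repetition_penalty`, the toy word-level "tokens", and the logit values are all assumptions made for the example, and the slope setting is not modeled at all.

```python
def apply_repetition_penalty(logits, recent_tokens, penalty=1.1, penalty_range=1024):
    """Return a copy of `logits` where recently seen tokens are made less likely.

    logits        -- dict mapping token -> raw score (toy stand-in for model output)
    recent_tokens -- list of tokens already in the chat, oldest first
    penalty       -- 1.0 means "no penalty"; larger values discourage repeats more
    penalty_range -- how many of the most recent tokens are checked for repeats
    """
    # Only tokens inside the most recent `penalty_range` positions are penalized.
    window = set(recent_tokens[-penalty_range:]) if penalty_range > 0 else set()
    adjusted = dict(logits)
    for token in window:
        if token in adjusted:
            score = adjusted[token]
            # Positive scores are divided and negative scores multiplied,
            # so the token always becomes less likely.
            adjusted[token] = score / penalty if score > 0 else score * penalty
    return adjusted


logits = {"hello": 2.0, "again": -1.0, "world": 0.5}
history = ["hello", "again"]
penalized = apply_repetition_penalty(logits, history, penalty=1.1, penalty_range=1024)
unchanged = apply_repetition_penalty(logits, history, penalty=1.0, penalty_range=1024)
```

In this sketch, a penalty of 1.0 leaves every score untouched, which is why the suggested range starts at 1. A larger range means more of the earlier chat is checked for repeats.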

¶ ❓Why do my character's messages end with <|im_end|>?

<|im_end|> is a special token used to mark the end of a message or turn in a conversation. Model developers use tokens like this to train AI for turn-based chats, helping it understand where one message stops and another starts. These sets of conversation-structuring markers are called instruct templates.

<|im_end|> is part of a chat template known as ChatML. ChatML is widely compatible with models like LLaMA, Qwen, and Mistral, even if they have their own templates, so it is the default in many shareable presets on Wyvern.
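
As a rough illustration of where <|im_end|> sits, the Python sketch below renders a short conversation in the ChatML layout. The helper name `to_chatml` and the sample messages are made up for this example; the exact prompt WyvernChat sends may include more fields.

```python
def to_chatml(messages):
    """Render a list of {"role", "content"} dicts as a ChatML-style prompt."""
    parts = []
    for message in messages:
        # Each turn opens with <|im_start|>role and closes with <|im_end|>.
        parts.append(
            f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
        )
    # The assistant turn is left open so the model writes the next reply.
    parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = to_chatml([
    {"role": "system", "content": "You are Aria, a cheerful innkeeper."},
    {"role": "user", "content": "Hello!"},
])
print(prompt)
```

The model is expected to end its own reply with <|im_end|>, which is normally removed before the message is shown to you.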

¶ Why’s it showing up in my bot’s responses?

1. You’re using DeepSeek

DeepSeek is a special cookie and a sassy rebel. Technically, DeepSeek has no instruct template. It cuts responses when needed but sometimes leaves <|im_end|> hanging at the end. No clear reason; it's just DeepSeek being DeepSeek.

Solutions:

- Ban HTML in outputs: Chat -> Chat settings in the right sidebar -> Miscellaneous -> Ban HTML in output: ON

- Switch the built-in instruct template from ChatML to DeepSeek.

2. There's a missing stop-sequence for <|im_end|>

If you’ve been experimenting with your chat template, you might’ve forgotten to add <|im_end|> to the stop sequences. Without it, the model won’t know to cut that token from the output. The telltale sign is the LLM continuing to generate out-of-context (OOC) text after <|im_end|>, such as instructions or reasoning.

Solutions:

- Check your preset settings and add <|im_end|> to the stop sequences.

- Check the Debug Panel > Stop sequences for <|im_end|>. It should be on the list.
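
The effect of a missing stop sequence can be sketched in a few lines of Python. This is a simplified simulation: a real backend stops generating when it hits a stop sequence, while this hypothetical `apply_stop_sequences` helper just truncates finished text, and the sample reply is invented.

```python
def apply_stop_sequences(text, stop_sequences):
    """Cut `text` at the earliest occurrence of any stop sequence."""
    cut = len(text)
    for stop in stop_sequences:
        if not stop:
            continue  # ignore empty entries, which would match everywhere
        index = text.find(stop)
        if index != -1:
            cut = min(cut, index)
    return text[:cut]


raw = "She smiles and waves.<|im_end|>\n(OOC: the user wants a longer reply)"

with_stop = apply_stop_sequences(raw, ["<|im_end|>"])
without_stop = apply_stop_sequences(raw, [])

print(repr(with_stop))     # reply ends cleanly before the token
print(repr(without_stop))  # token and trailing OOC text are kept
```

With <|im_end|> in the list, everything from the token onward is dropped. With an empty list, the token and the OOC text after it end up in the message.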

3. Are you certain it is exactly <|im_end|>, and not something else?

Some models pull raw text from web sources that contain HTML or XML tags. The model sees these in your chat history and tries to mimic them, spitting out variations like < | im_end | > or similar. Autocut only catches exact <|im_end|> matches, so these weird variants slip through.

Solutions:

- Such outputs are rare; just edit them out manually.

- Ban HTML in chat settings.
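
To see why an exact match misses these variants, here is a small Python sketch that compares exact matching against a looser pattern. Both the regular expression and the `strip_im_end_variants` helper are hypothetical illustrations for cleaning text yourself, not a WyvernChat feature.

```python
import re

# Matches "<|im_end|>" plus loosely spaced look-alikes such as "< | im_end | >".
IM_END_VARIANT = re.compile(r"<\s*\|?\s*im_end\s*\|?\s*>", re.IGNORECASE)


def strip_im_end_variants(text):
    """Remove <|im_end|> and close variants, then trim trailing whitespace."""
    return IM_END_VARIANT.sub("", text).rstrip()


reply = "Goodbye, traveler!< | im_end | >"

# An exact search does not find the spaced-out variant...
exact_hit = "<|im_end|>" in reply

# ...but the looser pattern does.
cleaned = strip_im_end_variants(reply)

print(exact_hit)
print(cleaned)
```

The pattern only fires when `im_end` appears between angle brackets, so ordinary text containing a lone `<` or `>` is left alone.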

Don't see your question answered? Ask in our Discord server's #support channel!